Ethical AI Adoption

Change Management for Ethical AI: Turning Principles into Lasting Adoption

Most organizations have endorsed responsible AI principles. The hard part is not writing the policy. The hard part is making fairness, transparency, and accountability visible in daily decisions. Change management is what closes that gap.

Ethical AI adoption is the measurable point at which an organization's workforce consistently applies AI governance principles without external compliance pressure. Unlike governance frameworks that stop at policy, IMA Worldwide's Accelerating Implementation Methodology (AIM) applies behavioral adoption practices to ethical AI, building the sponsorship and reinforcement systems that make compliance permanent rather than performative.

The Implementation Gap

Why Ethical AI Principles Fail at Implementation

The research on responsible AI consistently points to the same conclusion: principles do not produce behavior. Deliberate change management does.

Organizations spend months selecting, configuring, and deploying AI tools alongside ethics frameworks. Then adoption stalls. Users revert to old habits. Leaders declare success at go-live and move on. The behaviors never change. The distinction that matters is between installation and implementation. An AI governance document that defines roles, ethics standards, and compliance requirements is installation: the framework exists. Getting people to actually perform those roles, follow those standards, and comply consistently is implementation: the framework is used.

Skipping the people side of ethical AI does not just slow adoption. It creates risk. Biased models that go unchallenged harm real people and attract regulatory scrutiny. Unexplainable decisions erode user trust and invite legal exposure. When no one owns AI outcomes, errors compound quietly until they surface publicly. As regulations such as the EU AI Act take effect and voluntary frameworks such as the NIST AI Risk Management Framework (AI RMF) raise expectations, the cost of reactive compliance far exceeds the cost of building behavior change into the rollout from the start.

Failure Pattern 1

Principles Without Behavioral Definitions

Stating that AI must be "fair" or "transparent" creates no obligation on any specific person to do anything specific. Without observable behavior definitions, ethics governance produces documentation that nobody acts on and auditors cannot verify.

Failure Pattern 2

Sponsorship That Is Endorsement, Not Action

A senior leader who approves the ethics policy but does not personally model ethical AI practice sends the signal that principles are aspirational. Active sponsorship means demonstrating the behavior, not just approving it. Sponsorship accounts for 30 to 50 percent of implementation success (IMA implementation research).

Failure Pattern 3

Reinforcement Disconnected from Ethics Behaviors

If teams complete ethics training but still skip bias audits before deployment, the reward system is not connected to the real behavior. Until governance behaviors show up in what gets measured and recognized, the ethics policy exists on paper and nowhere else.

Failure Pattern 4

One-Size Governance Across Different Roles

A governance policy that applies the same approach to data scientists, front-line users, and executive sponsors will not work for any of them. What ethical AI practice looks like for a model development team is completely different from what it looks like for a business analyst using AI outputs daily.

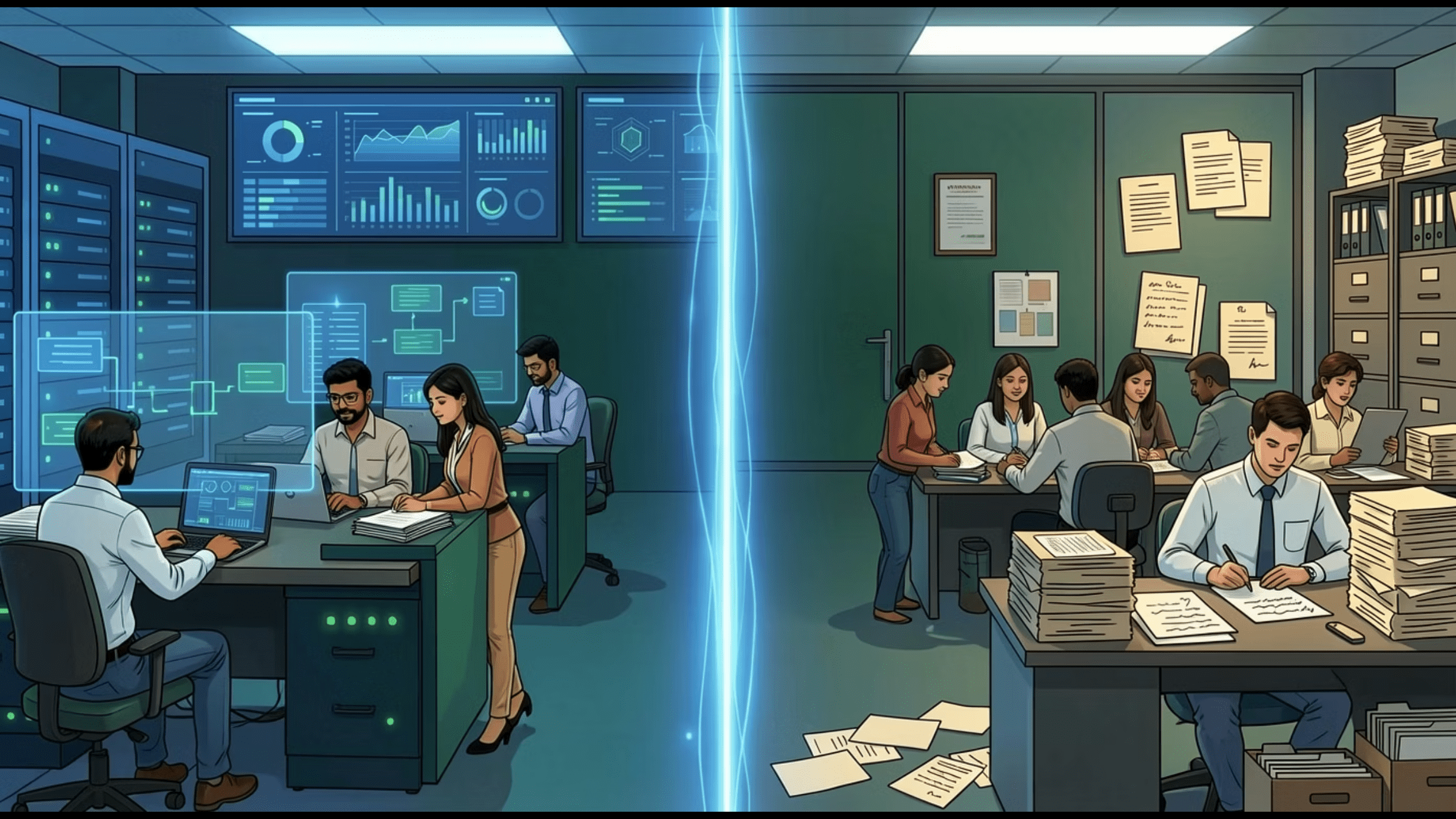

Behavior vs. Policy

Behavioral Adoption of AI Ethics

Policy compliance checks that a box is ticked. Behavioral adoption means ethics principles are visible in the daily decisions of data scientists, managers, compliance officers, and executives. The right question at every stage is not "does the policy exist?" but "did behaviors change?"

Ethical AI Policy Compliance: Installation

- Ethics policy document published

- Ethics training completion logged

- Responsible AI committee established

- Governance framework submitted to leadership

- Audit checklist completed at go-live

- Bias testing run once before deployment

Ethical AI Behavioral Adoption: Implementation

- Data scientists run bias audits before every deployment

- Managers challenge unexplained AI recommendations

- Compliance officers escalate findings without being asked

- Leaders model ethical AI practice in their own decisions

- Governance behaviors are measured in performance reviews

- Reinforcement audits confirm rewards align with ethics goals

Applied to ethical AI, the four foundations of IMA Worldwide's AIM (Accelerating Implementation Methodology) are:

- Define the specific, visible behaviors that ethical AI requires for each role.

- Identify whose daily work must change before designing training or governance plans.

- Connect reinforcement to the moment of ethical decision, not to training completion.

- Map influence by trust and daily interaction rather than by formal authority alone.

Adoption Requirements

Fairness, Transparency, and Accountability as Adoption Requirements

Fairness, transparency, and accountability are not just ethical principles. They are adoption requirements. When AI systems produce unexplained decisions or unfair outcomes, users lose trust in the tools and stop using them. Change management builds these principles into daily behavior, not just policy documents.

Principle One

Fairness

Bias in AI outputs destroys user trust and stalls adoption before it starts. Fairness requires specific behaviors from specific people: data scientists who test models across groups before deployment, managers who escalate bias findings rather than dismiss them, and leaders who reinforce that fairness checks are non-negotiable.

AIM Application: Without change management, fairness stays a principle on paper. With it, fairness becomes a behavior pattern that people perform every day because it is what gets measured and rewarded.
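
To make "test models across groups before deployment" concrete, here is a minimal sketch of one possible check: comparing the share of positive outcomes each group receives. The function names, the sample data, and the 0.8 review threshold are all assumptions for illustration (the threshold echoes the four-fifths rule of thumb). A real audit would use the metrics and cutoffs defined in your own governance policy.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Return the share of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive outcome
    return min(rates.values()) / highest


# Hypothetical model outputs (1 = approved) and group labels.
predictions = [1, 0, 1, 1, 0, 1, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(predictions, groups)
ratio = disparate_impact_ratio(rates)

REVIEW_THRESHOLD = 0.8  # assumed cutoff; set by your own governance policy
needs_escalation = ratio < REVIEW_THRESHOLD
```

The point of the sketch is the behavior around it: the data scientist runs the check, and a result below the threshold goes to a manager who escalates it rather than dismissing it.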

Principle Two

Transparency

People do not adopt AI tools they cannot understand or explain to others. Transparency means users can explain how a decision was reached, auditors can review model logic, and managers can answer when teams ask why the AI recommended something.

AIM Application: Change management makes transparency a daily practice by defining what clear explanation looks like for each role, training people on model documentation, and reinforcing the habit of checking before acting on AI outputs.

Principle Three

Accountability

When no one owns AI outcomes, problems get buried and adoption erodes. AIM's role structure creates the ownership layer that AI accountability requires. Sponsors own the adoption outcome. Change agents own the adoption process. Targets own their behavior change.

AIM Application: Without this structure, accountability spreads too thin and AI errors go uncorrected long enough to damage trust permanently. Clear role ownership makes ethical AI governable, not just aspirational.

Leadership & Sponsorship

Leadership Accountability for Ethical AI

Leaders have six non-delegable tasks in ethical AI adoption: establish the business case for responsible AI personally, participate in setting adoption goals, allocate real resources for change management, align reward systems to ethical behaviors, build the sponsorship cascade through every management layer, and monitor adoption progress directly. Passive endorsement of a governance policy is not sponsorship. Active sponsorship means personally demonstrating that ethical AI practice is observable, expected, and rewarded.

Role Type 1

Sponsors

Leaders whose organizational authority makes ethical AI real. Sponsors explain the business case for responsible AI personally, demonstrate ethical AI practice in their own decisions, and reward teams that surface governance concerns early. They cannot delegate this role. When they do, ethical AI becomes optional.

Role Type 2

Change Agents

Practitioners trusted by both sponsors and the front-line people whose work must change. That two-way trust is essential. Change agents translate ethical AI strategy into local action, coach teams on governance behaviors, and identify resistance before it hardens into patterns that undermine the entire effort.

Role Type 3

Targets

The people whose daily decisions must change for ethical AI to be real. In AI adoption, targets include data scientists running audits, managers reviewing model outputs before acting, and compliance staff tracking regulatory requirements. Everyone is a Target first, including the executives sponsoring the effort.

Before a leader can effectively sponsor ethical AI change, they must go through their own adoption journey. Leaders who require governance behaviors they do not perform themselves damage credibility and create resistance throughout the organization. A sponsor who has not personally adopted ethical AI practice cannot model the behavior they are asking others to perform.

Governance Implementation

What Are the Six Steps to Make AI Governance Actually Work?

AI governance frameworks fail for the same reason AI rollouts fail: they produce policies without producing behavior change. AIM gives governance efforts the behavior-change structure needed to move from documents to daily practice.

- Define the Behavior Changes Governance Requires. Every governance requirement translates into a specific behavior someone must perform. Replace "use AI ethically" with observable actions: "Run a bias check before each model goes live and document the result." The clearer the behavior, the easier it is to train, reinforce, and audit.

- Identify Who Must Change First. Name the targets before mapping sponsors or change agents. Governance targets are the people whose daily work must change: data scientists running audits, managers reviewing model decisions, compliance staff tracking regulatory requirements. Governance without target identification produces rules nobody owns.

- Connect Reinforcement to Governance Behaviors. If teams complete ethics training but still skip bias checks before deployment, the reward system is not connected to the real behavior. Ask: what consequence follows when someone skips a governance step? What recognition follows when someone catches a problem early? Connect both to the specific behavior, not to compliance completion.

- Map Roles by Real Influence, Not Org Chart. The person most likely to shift a team's governance behavior is often a respected technical lead or senior peer, not the compliance officer. Identify who truly influences each target group and build the sponsorship and change agent plan around those people, not just formal authority.

- Apply AIM's Process to the Governance Rollout. Governance is itself a change effort. It needs a business case for action, target identification, sponsorship, training, and reinforcement. AIM's process gives governance teams the structure to manage the people side of compliance, not just the policy side.

- Localize Governance to Each Team's Context. Data scientists, front-line users, and executive sponsors work in different contexts, so a single uniform rollout will not fit any of them. Build implementation plans around the specific resistance, workflows, and influence patterns of each group.
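
The first step's example behavior, "Run a bias check before each model goes live and document the result," can be sketched as a simple deployment gate. This is an illustration under stated assumptions, not part of AIM: `BiasAuditRecord`, `MissingAuditError`, and `approve_deployment` are hypothetical names, and a real gate would live in your own release tooling.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class BiasAuditRecord:
    """Hypothetical record of a documented bias check."""
    model_version: str
    auditor: str
    completed_on: date
    result_summary: str


class MissingAuditError(Exception):
    """Raised when a model is released without a documented bias check."""


def approve_deployment(model_version: str, audit: Optional[BiasAuditRecord]) -> str:
    """Allow go-live only if a documented audit exists for this exact version."""
    if audit is None:
        raise MissingAuditError(f"No bias audit on file for {model_version}")
    if audit.model_version != model_version:
        raise MissingAuditError(
            f"Audit covers {audit.model_version}, not {model_version}"
        )
    if not audit.result_summary.strip():
        raise MissingAuditError("Audit was run but the result was not documented")
    return (
        f"{model_version} approved; audit by {audit.auditor} "
        f"on {audit.completed_on.isoformat()}"
    )


# Hypothetical usage.
record = BiasAuditRecord(
    model_version="credit-model-2.1",
    auditor="j.doe",
    completed_on=date(2025, 3, 4),
    result_summary="Selection rates reviewed across groups; no escalation needed.",
)
message = approve_deployment("credit-model-2.1", record)
```

A gate like this makes the behavior observable and auditable, but it does not replace the reinforcement step: someone still has to notice and respond when the gate is bypassed or when an audit catches a problem early.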

Reinforcement & Measurement

Reinforcement Systems for Responsible AI Use

Keeping ethical AI compliance consistent requires behavior-based tracking and steady reinforcement. Measurement must begin at the start of the rollout, not after problems surface. The levers leaders can pull do not carry equal weight: communicating about ethical AI change has a 1x impact, modeling it has a 2x impact, and reinforcing it has a 3x impact.

| KPI | What It Measures | Priority |
|---|---|---|
| Reinforcement Audits | Review what leaders reward to confirm incentives match ethical AI goals. If promotion criteria do not mention governance behaviors, the reinforcement system is misaligned. | High |
| Sponsorship Assessments | Verify that leaders are personally performing the express, model, and reinforce behaviors that sustain ethical AI practice, not just endorsing the policy. | Medium |
| Readiness Assessments | Identify where resistance or fatigue could slow ethical AI adoption and diagnose which of the five readiness elements is missing for each target group before designing a response. | High |
| Behavioral Indicators | Track whether bias audits are completed before deployment, whether managers review AI outputs before acting, and whether AI-generated decisions are documented for audit. Not just training completion. | High |
| Targeted Reinforcement Index | Measure whether recognition and rewards actually support the desired ethical behaviors rather than rewarding speed and output at the expense of governance compliance. | Medium |

Compliance is not a one-time box to check. It requires steady reinforcement, monitoring, and course correction. Train staff regularly, run ethics impact assessments, and record governance decisions. AIM's behavior-change approach gives compliance teams a repeatable structure for sustaining the right behaviors over time, not just at launch. Human oversight must be ongoing. Do not set up a governance framework and then leave it unattended. Oversight is not a governance layer. It is a behavior pattern that must be reinforced like any other.
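
As a rough illustration of the Behavioral Indicators KPI, the sketch below computes two rates from a deployment log: how often a bias audit was completed on or before the deployment date, and how often the decision was documented for audit. The log structure, field names, and sample entries are assumptions made for this example. A real organization would pull the equivalent data from its own release and audit systems.

```python
from datetime import date


def behavioral_indicators(deployments):
    """Summarize how often governance behaviors actually happened."""
    total = len(deployments)
    if total == 0:
        return {"audit_before_deploy_rate": None, "documented_rate": None}
    # Count only audits finished on or before the deployment date.
    audited_first = sum(
        1
        for d in deployments
        if d["audit_date"] is not None and d["audit_date"] <= d["deploy_date"]
    )
    documented = sum(1 for d in deployments if d["decision_documented"])
    return {
        "audit_before_deploy_rate": audited_first / total,
        "documented_rate": documented / total,
    }


# Hypothetical deployment log entries.
deployment_log = [
    {"model": "m1", "audit_date": date(2025, 1, 10),
     "deploy_date": date(2025, 1, 15), "decision_documented": True},
    {"model": "m2", "audit_date": None,
     "deploy_date": date(2025, 2, 1), "decision_documented": True},
    {"model": "m3", "audit_date": date(2025, 3, 9),
     "deploy_date": date(2025, 3, 2), "decision_documented": False},
    {"model": "m4", "audit_date": date(2025, 4, 5),
     "deploy_date": date(2025, 4, 5), "decision_documented": True},
]

indicators = behavioral_indicators(deployment_log)
```

Note that the audit run after deployment (`m3`) does not count. The indicator tracks whether the behavior happened at the right moment, not whether the paperwork eventually appeared.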

Frequently Asked Questions

FAQ: Change Management for Ethical AI

Why do ethical AI principles fail at implementation?

Ethical AI principles fail at implementation because they produce policies without producing behavior change. Organizations endorse fairness and transparency statements, then deploy AI without the governance structures, reinforcement systems, or behavioral definitions needed to make those principles observable in daily decisions. Principles are installation. Behavior change is implementation.

How does behavioral adoption of AI ethics differ from policy compliance?

Policy compliance checks that a document exists and a box is ticked. Behavioral adoption means a data scientist runs a bias audit before every model goes live, a manager challenges an unexplained AI recommendation, and a compliance officer escalates a finding without waiting to be asked. Ethics lives in repeated daily decisions, not in governance documents.

What is the leadership role in ethical AI adoption?

Leaders have six non-delegable tasks in ethical AI: establish the business case for responsible AI personally, participate in setting adoption goals, allocate real resources for change management, align reward systems to ethical behaviors, build the sponsorship cascade through every management layer, and monitor adoption progress directly. Passive endorsement is not sponsorship.

How do you build reinforcement systems for responsible AI use?

Reinforcement for responsible AI connects rewards to the specific behaviors that governance requires: catching a bias issue early, flagging an unexplainable model output, documenting a decision for audit. Performance criteria must include ethical AI behaviors. Governance reviews and compliance checkpoints must reward early detection, not just punish failure after problems surface.

How do you measure ethical AI adoption rather than policy existence?

Measure behavior, not documents. Track whether bias audits are being completed before deployment, whether managers review AI outputs before acting on them, whether reinforcement audits confirm that what leaders reward aligns with ethical goals, and whether readiness assessments identify resistance before it hardens. Policy existence is installation. Behavioral consistency is adoption.

How does the AIM methodology address ethical AI challenges specifically?

AIM addresses ethical AI by defining governance requirements as specific observable behaviors, identifying the target groups whose daily decisions must change, building sponsorship structures where leaders personally model ethical AI practice, and establishing reinforcement systems where governance behaviors are measured and rewarded. Everyone is a Target first, including executives responsible for ethical AI oversight.

Turn Ethical AI Principles into Observable Daily Practice

Ethical AI policies without change management produce governance on paper and risk in production. The Accelerating Implementation Methodology (AIM), a behavior-first approach to implementation, closes the gap between responsible AI principles and the behaviors that make them real.